The shift in four numbers

Why networking has become the critical variable

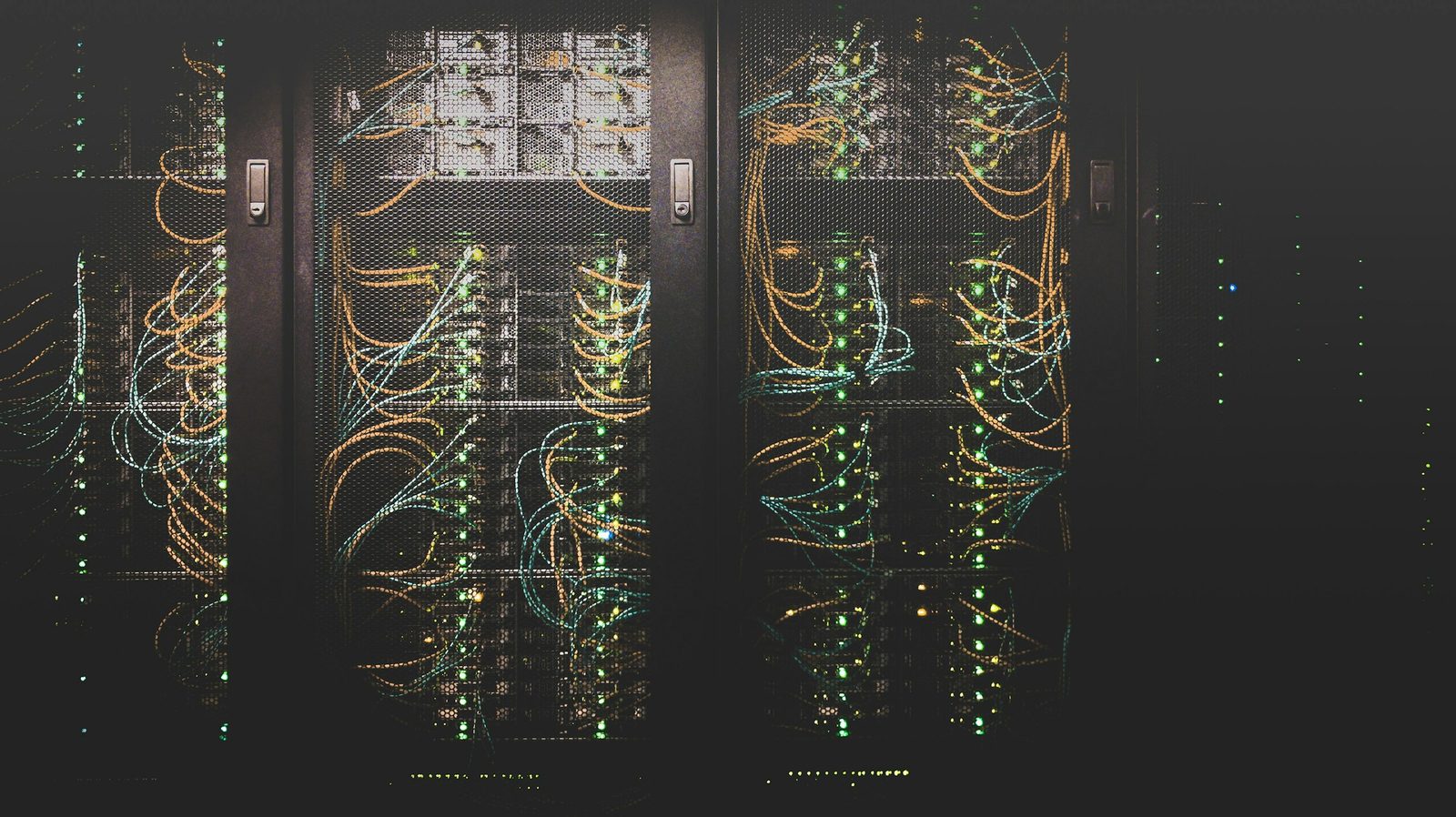

The first phase of the AI infrastructure build-out was defined by the race for accelerator chips. The next phase will be defined by the race to connect them. Every GPU requires connections to function in a cluster — and as AI systems scale from thousands to hundreds of thousands to millions of accelerators, the networking layer becomes as critical as the compute layer itself.

A single AI accelerator operating in isolation delivers a fraction of its potential value. Networking is what transforms individual chips into cooperative computing systems — enabling the seamless data exchange, low latency, and high bandwidth that large model training and inference require. Without it, compute investment is stranded.

The investment community has been slower to focus on this layer than on chips and data-centre construction. That is beginning to change. The specifications of next-generation AI server platforms — from current Blackwell-class systems through the coming Rubin and Rubin Ultra generations — imply networking dollar content per computing unit growing by an order of magnitude or more. This is not a marginal upgrade. It is a structural shift in where capital flows within the AI infrastructure stack.

The total addressable market for AI networking is projected to expand roughly tenfold, from approximately $15bn in the current generation to $154bn in the Rubin Ultra era, driven by rising dollar content per rack and growing deployment volumes. Scale-up networking, which connects GPUs within and across racks, will be the dominant growth driver, accounting for ~69% of peak TAM. Co-packaged optics, launching commercially in 2026, alone represents a $91bn opportunity at peak. Silicon photonics is displacing legacy EML in optical modules, with penetration rising from ~6% in early 2024 to ~46% by late 2028. And light-source supply, particularly for continuous-wave lasers, is expected to remain structurally tight through 2027.

The AI build-out spent its first phase arguing about who makes the best chip. Its next phase will be decided by who moves data fastest, at the lowest power, at the largest scale.

Critically, this growth is broad-based — it does not depend on any single technology configuration prevailing. Whether clusters scale primarily through scale-out switching or scale-up supernode architectures, whether co-packaged optics or pluggable modules dominate, all configurations are expected to benefit from strong structural demand for higher bandwidth, lower latency, and better power efficiency. The question for investors is not whether optical networking grows, but where within it value concentrates.

Understanding the two dimensions of AI networking

AI cluster networking operates across two established dimensions, scale-up and scale-out, each with its own technology requirements, component mix, and growth trajectory; a third, scale-across, is emerging but is not yet part of the base TAM. Understanding the distinction is essential to mapping the investment opportunity.

| Dimension | Definition | Primary media | Share of peak TAM |
|---|---|---|---|
| Scale-up | Adding GPU and compute resources within the same device or rack; extends to cross-rack "supernode" configurations where inter-rack speeds approach intra-rack performance. | Copper cables → PCB midplane → CPO (progressive generations) | 69% · ~$106bn |
| Scale-out | Adding more equipment and connecting it via switching technologies, the traditional mode of network expansion used across large AI training clusters. | Pluggable optical modules → CPO TOR switches (1.6T → 3.2T) | 31% · ~$48bn |
| Scale-across | Connecting servers across data centres in geographically distinct locations, an emerging frontier enabled by high-speed Ethernet and optical interconnect. | Hollow-core fibre; dedicated Ethernet switches and NICs | Incremental; not yet in base TAM |
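The peak-TAM split in the table is simple arithmetic on the two rounded dollar figures. The short Python sketch below reproduces it; its only inputs are the rounded values quoted in this note (~$106bn scale-up, ~$48bn scale-out, ~$15bn at the GB300 stage), so small differences from unrounded model outputs should be expected.

```python
# Rounded peak-TAM figures quoted in this note, in US$bn.
SCALE_UP_PEAK_BN = 106.0
SCALE_OUT_PEAK_BN = 48.0
GB300_STAGE_TAM_BN = 15.0  # approximate current-generation TAM

peak_tam_bn = SCALE_UP_PEAK_BN + SCALE_OUT_PEAK_BN
scale_up_share = SCALE_UP_PEAK_BN / peak_tam_bn
scale_out_share = SCALE_OUT_PEAK_BN / peak_tam_bn
growth_multiple = peak_tam_bn / GB300_STAGE_TAM_BN

print(f"Peak TAM: ${peak_tam_bn:.0f}bn")
print(f"Scale-up {scale_up_share:.0%}, scale-out {scale_out_share:.0%}")
print(f"Multiple of GB300-stage TAM: {growth_multiple:.1f}x")
```

On these rounded inputs the 69/31 split holds, and the peak market is a little over ten times the GB300-stage base.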

The key insight is that scale-up networking — historically dominated by copper and largely overlooked as an investment theme — is undergoing a complete architectural transformation. As GPUs migrate from single-rack configurations to multi-rack supernode designs with hundreds of accelerators operating as a single logical unit, the networking requirements within those configurations become dramatically more demanding. This is the origin of the 29× dollar-content increase projected from the current generation to Rubin Ultra.

Scale-out networking, meanwhile, continues to evolve in its own right — with attach rates per GPU roughly doubling between generations and data rates migrating from 800G to 1.6T to 3.2T. Even where scale-out appears to be a "known" market, the magnitude of content growth per computing unit is substantially underappreciated.

Four generations, one direction: networking content compounds

The trajectory from current-generation platforms to Rubin Ultra represents one of the most dramatic step-changes in infrastructure component demand in the history of the technology industry. Each generation raises the bandwidth ceiling, expands the optical footprint, and introduces new connection modalities.

GB300 NVL72

The current production baseline. Scale-up runs entirely on copper cable; scale-out leans on pluggable 1.6T modules. ~216 optical modules per computing unit. CPO not yet introduced.

Vera Rubin NVL72

Optical module count per computing unit doubles to ~432 as attach ratios rise. First commercial appearance of CPO on the scale-out side, beginning at 0–25% penetration. Scale-up remains copper.

Rubin Ultra NVL144

PCB midplane replaces backplane copper for scale-up; scale-out migrates to 3.2T with ~29% CPO penetration. Networking content per unit crosses $1m for the first time.

Rubin Ultra NVL576

The multi-rack supernode. Eight racks operate as a single logical computing unit, connected by CPO-driven scale-up optics. Content per computing unit reaches ~$9.4m (eight racks at ~$1.17m each), a 29× step-up from GB300.

| Component | GB300 NVL72 | Vera Rubin NVL72 | Rubin Ultra NVL144 | Rubin Ultra NVL576 |
|---|---|---|---|---|
| Scale-up networking | | | | |
| Copper cable (backplane) | $93k | $93k | — | $156k |
| Copper cable (flyover / switch tray) | $47k | $47k | $156k | $78k |
| PCB midplane | — | — | $225k | — |
| CPO optical engine & FAU (scale-up) | — | — | — | $324k |
| Scale-up subtotal | $140k | $140k | $381k | $803k |
| Scale-out networking | | | | |
| Pluggable optical modules | $173k | $346k | $491k | $245k |
| CPO optical engine & FAU (scale-out) | — | — | $200k | $100k |
| Fibre cable, MPO & other | $2k | $4k | $41k | $21k |
| Scale-out subtotal | $175k | $349k | $732k | $366k |
| Total networking content / rack | $315k | $489k | $1,113k | $1,169k |
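The table is expressed per rack, while the generation notes above quote content per computing unit. A minimal Python sketch of the conversion follows. It assumes, as the note's figures imply, that NVL576 is an eight-rack unit and that the other three platforms are single-rack units; the racks-per-unit mapping is an assumption of this sketch, not a platform specification.

```python
# Total networking content per rack from the table above, in US$ thousands.
CONTENT_PER_RACK_K = {
    "GB300 NVL72": 315,
    "Vera Rubin NVL72": 489,
    "Rubin Ultra NVL144": 1_113,
    "Rubin Ultra NVL576": 1_169,
}

# Assumed racks per computing unit: NVL576 is the eight-rack supernode;
# the other platforms are treated as single-rack units.
RACKS_PER_UNIT = {
    "GB300 NVL72": 1,
    "Vera Rubin NVL72": 1,
    "Rubin Ultra NVL144": 1,
    "Rubin Ultra NVL576": 8,
}

# Content per computing unit = content per rack x racks per unit.
per_unit_k = {
    platform: per_rack * RACKS_PER_UNIT[platform]
    for platform, per_rack in CONTENT_PER_RACK_K.items()
}
baseline_k = per_unit_k["GB300 NVL72"]

for platform, content_k in per_unit_k.items():
    print(f"{platform}: ${content_k / 1_000:.2f}m per unit, "
          f"{content_k / baseline_k:.1f}x GB300")
```

On these inputs the NVL576 unit carries about $9.35m of networking content, roughly 29.7 times the GB300 NVL72 rack, which is consistent with the ~$9.4m and 29× figures quoted above.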

The connection-media hierarchy: PCB, copper, and optics

AI data-centre connections are not a single technology — they are a hierarchy of solutions optimised for different distances, speeds, and cost points. Understanding where each medium excels and where it fails determines how the networking stack evolves across generations.

The decisive trend is that optics are migrating inward — from long-distance scale-out connections toward the shorter, denser, higher-bandwidth environment of scale-up networking. This migration is what drives the disproportionate growth in networking dollar content across generations. It is not that scale-out optics contracts; it is that scale-up optics is a category that barely existed in the previous generation and is becoming one of the largest line items in the AI infrastructure bill of materials.

Co-packaged optics: the technology defining the next phase

Co-packaged optics — CPO — is the most consequential new technology entering commercial deployment in AI networking in 2026. It places optical engines as close as physically possible to the chip, shortening electrical signal paths from several centimetres to millimetre scale and fundamentally changing the power, latency, and bandwidth characteristics of the connection.

Conventional pluggable optical modules sit at the edge of the switch chassis — connected via a multi-centimetre electrical path that limits bandwidth, consumes power through digital signal processors and retimers, and introduces latency. CPO moves the optical engine to the package substrate, directly adjacent to the switch ASIC or accelerator chip. The shorter path eliminates conversion stages, cuts power consumption, and enables the high-bandwidth, short-distance connections that next-generation AI clusters demand.

CPO co-packaged with the switch ASIC. Available from leading switch vendors in early 2026. Dominant application: scale-out top-of-rack and spine switches. This is the version of CPO entering the field today.

CPO for scale-up supernodes. Optical engines and fibre replace copper flyover cables for rack-to-rack connections within multi-rack computing units (Rubin Ultra NVL576). The $324k-per-rack scale-up CPO line item in 2027–2028 sits here.

CPO directly with the XPU (GPU / CPU). Optical engine co-packaged with the accelerator itself, bypassing the switch entirely. Highest integration, highest bandwidth potential. Multiple vendors in early development; commercial timing remains uncertain.

The economic case for CPO is most compelling at high bandwidth densities where pluggable modules reach their physical limits. At 6.4T and 12.8T speeds, the constraints of pluggable optical form factors — power, size, heat — become prohibitive, making CPO not merely preferable but practically necessary.

CPO does carry real trade-offs. It requires supply-chain migration at the system level rather than simple module replacement. Maintenance complexity is higher: while a failed pluggable module can be swapped in minutes without disrupting the switch, a failed CPO optical engine in a switch-integrated design may require replacing the switch PCB. Reliability engineering for CPO systems is consequently more demanding, and the coexistence of pluggable modules and CPO is expected to persist across all major use cases for the foreseeable future.

| Vendor | Current status | Approach |
|---|---|---|
| NVIDIA | CPO switch commercially available, 2026 (scale-out). Investment in optical interconnect sector disclosed at $6bn (Q1 2026). | Micro-ring modulator (MRM): higher density and integration efficiency. |
| Broadcom | 102.4T CPO switch in customer delivery. 51.2T Bailly delivered Mar 2024; Davisson (102.4T) delivered to customers Oct 2025. | Mach-Zehnder modulator (MZM): mature, with ongoing MRM development. |
| Marvell | CPO Ethernet switch sampling targeted 2027. Acquired Celestial AI (CPO for XPUs) in Feb 2026. | CPO designed to integrate with custom XPU platforms for cloud service providers. |
| Ranovus / MediaTek | CPO for ASIC in qualification. Odin CPO solutions (6.4T) announced with MediaTek ASIC platform. | CPO integration directly with application-specific XPU designs. |

Silicon photonics: displacing the incumbent technology

Within pluggable optical modules — which remain the dominant form factor for scale-out AI networking and will continue to co-exist with CPO — a technology transition is under way from electro-absorption-modulated lasers (EML) to silicon photonics (SiPh). This shift has significant implications for bill-of-materials costs, gross margins, and supply-chain positioning.

Silicon photonics offers three structural advantages over traditional EML-based modules: higher integration density and smaller physical footprint; lower power consumption through reduced component count; and lower manufacturing cost at scale. These advantages compound as data rates rise — silicon photonics offers a 32% bill-of-materials cost advantage and a 20% price advantage at 1.6T versus EML equivalents.

Silicon photonics share of optical module shipments is projected to rise from ~6% in Q1 2024 to ~46% by Q4 2028. The migration is non-linear: it accelerates as 1.6T becomes mainstream and 3.2T sampling begins, since the cost advantage widens at higher speeds.

For long-distance connections where continuous-wave lasers struggle to deliver sufficient power, EML retains a performance advantage. For network operators prioritising proven reliability on critical AI infrastructure where GPU costs dwarf optical module costs, EML's established track record remains a relevant consideration. The result is co-existence rather than a clean replacement cycle.

For suppliers, the silicon photonics transition is margin-positive. As the product mix shifts toward higher-speed SiPh-based modules, which benefit from lower laser input costs, optical module supplier gross margins are projected to expand toward 48–55%.
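Why a cost advantage larger than the price advantage lifts margins can be shown in a few lines of Python. This sketch assumes the 32% bill-of-materials advantage and the 20% price advantage quoted above apply to the same 1.6T module, and it uses hypothetical EML starting margins; it illustrates the direction of the effect, not the supplier margin projection itself.

```python
def gross_margin(price: float, cost: float) -> float:
    """Gross margin as a fraction of price."""
    return (price - cost) / price


def siph_margin(eml_margin: float, bom_advantage: float = 0.32,
                price_advantage: float = 0.20) -> float:
    """Gross margin of a SiPh module versus an EML module at the same speed.

    The EML price is normalised to 1.0, so the EML BOM cost is (1 - eml_margin).
    The SiPh BOM is `bom_advantage` lower and its price `price_advantage` lower
    than the EML equivalent (an interpretation of the quoted advantages).
    """
    eml_price = 1.0
    eml_cost = eml_price * (1.0 - eml_margin)
    siph_price = eml_price * (1.0 - price_advantage)
    siph_cost = eml_cost * (1.0 - bom_advantage)
    return gross_margin(siph_price, siph_cost)


# Hypothetical EML starting margins, for illustration only.
for eml in (0.30, 0.40, 0.50):
    print(f"EML margin {eml:.0%} -> SiPh margin {siph_margin(eml):.1%}")
```

Because cost falls proportionally faster than price, the SiPh cost-to-price ratio is 0.68 / 0.80 = 0.85 times the EML ratio, so under these assumptions the SiPh margin exceeds the EML margin for any starting margin below 100%.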

Optical circuit switches: the all-optical frontier

Optical circuit switches — OCS — represent the most architecturally disruptive technology in the AI networking stack. Unlike conventional switches that require optical-electrical-optical signal conversion at every hop, OCS operates entirely in the optical domain, routing light signals directly from input to output fibre without any electronic conversion.

The strategic appeal for AI data centres is compelling. An OCS passes 800G, 1.6T, and 3.2T signals identically: it does not "see" the data rate, only the light. This means an AI cluster can upgrade its compute platform without replacing its switching infrastructure. In an environment where data rates double every 12–18 months, the ability to upgrade clusters in the field without replacing switches is operationally and economically significant.

OCS commercial momentum is building rapidly. Leading optical component suppliers have disclosed OCS order backlogs in the hundreds of millions of dollars as of early 2026, each reporting engagements with more than ten hyperscale customers. The technology has already been deployed at scale in leading hyperscaler AI supercomputer platforms. The industry consortium driving OCS standardisation includes most major AI platform vendors and optical component companies.

Speed migration: 800G → 1.6T → 3.2T

Data-rate migration is the engine of blended average selling price growth in optical modules. As higher-speed modules displace lower-speed ones in deployment, the revenue pool expands even without volume growth — and volume is growing rapidly as well.
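The mix effect can be made concrete with a toy calculation. The ASPs and unit volumes below are hypothetical placeholders chosen only to show the mechanism; they are not estimates for any product or supplier. Total volume is held flat at 10m modules while the mix shifts from 800G toward 1.6T.

```python
# Hypothetical ASPs in US$ per module; illustrative placeholders, not estimates.
ASP = {"800G": 600.0, "1.6T": 1000.0}


def revenue_and_blended_asp(units: dict[str, float]) -> tuple[float, float]:
    """Return (revenue, blended ASP) for a unit mix keyed by speed tier."""
    revenue = sum(units[speed] * ASP[speed] for speed in units)
    total_units = sum(units.values())
    return revenue, revenue / total_units


# Same total volume (10m modules) in both years; only the mix changes.
year_1 = {"800G": 8e6, "1.6T": 2e6}
year_2 = {"800G": 4e6, "1.6T": 6e6}

for label, mix in (("Year 1", year_1), ("Year 2", year_2)):
    revenue, blended = revenue_and_blended_asp(mix)
    print(f"{label}: revenue ${revenue / 1e9:.1f}bn, blended ASP ${blended:,.0f}")
```

With volume unchanged, revenue rises from $6.8bn to $8.4bn purely because the blended ASP rises from $680 to $840; any volume growth compounds on top of this.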

| Speed | 2024 | 2025 | 2026 | 2027 | 2028E |
|---|---|---|---|---|---|
| 400G | Mainstream | Mainstream | Declining | Declining | Legacy |
| 800G | Sampling | Ramping | Mainstream | Mainstream | Fading |
| 1.6T | — | Verify | Sample → mass | Mass volume | Mainstream |
| 3.2T | — | — | — | Verify → sample | Ramping |

The 1.6T migration is the critical transition for the 2026–2027 period — moving from mainstream 800G to a generation that doubles per-port bandwidth. The Vera Rubin platform doubles the optical module attach ratio per GPU from 1:2–3 to 1:4–6, compounding the volume effect of the speed upgrade. 3.2T, while still in early verification stages in 2026, will be the standard for Rubin Ultra scale-out connections in 2027–2028.
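The module counts quoted in the generation notes are consistent with the upper end of each attach-ratio range applied to a 72-GPU rack. A minimal Python check follows; reading the counts as 72 × 3 and 72 × 6 is this sketch's interpretation rather than a stated methodology.

```python
GPUS_PER_NVL72_RACK = 72


def modules_per_rack(gpus: int, modules_per_gpu: int) -> int:
    """Optical modules per rack implied by a 1:N GPU-to-module attach ratio."""
    return gpus * modules_per_gpu


# Upper ends of the 1:2–3 (GB300) and 1:4–6 (Vera Rubin) ranges.
gb300_modules = modules_per_rack(GPUS_PER_NVL72_RACK, 3)
vera_rubin_modules = modules_per_rack(GPUS_PER_NVL72_RACK, 6)
print(gb300_modules, vera_rubin_modules)  # 216 432

# Implied average content per pluggable module, from the per-rack table (US$).
print(f"GB300: ${173_000 / gb300_modules:,.0f} per module")
print(f"Vera Rubin: ${346_000 / vera_rubin_modules:,.0f} per module")
```

Both columns imply roughly $800 per module, so on the table's figures the doubling of the pluggable line item is driven by module count rather than by unit price.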

This migration cycle is expected to be extended by Chinese cloud infrastructure deployments, which tend to lag Western hyperscaler platforms by 12–18 months but represent substantial incremental demand for each speed tier as they upgrade.

Light-source supply: a structural bottleneck through 2027

The most acute near-term constraint in the optical networking supply chain is not the optical module itself — it is the laser light source that powers it. Supply of both EML and continuous-wave (CW) laser components remains very tight in 2026 and is expected to persist through 2027 before reaching a more balanced state in 2H 2028.

In 2026, strong demand from AI server ramp-up, speed migration, and optical connection expansion combines with InP substrate supply constraints and geopolitical and export-control pressures on key materials. Capacity expansion is under way but not yet sufficient.

In 2027, capacity expansions announced across major suppliers begin to come online. Demand remains elevated as Rubin Ultra deployments accelerate. The supply-demand balance improves but remains favourable for component suppliers.

By 2H 2028, post-expansion capacity meets moderating demand growth as the cadence of AI server specification upgrades stabilises and the industry shifts increasingly from training toward inference. Pricing is likely to normalise.

The supply constraint is driven by three reinforcing factors. First, InP (indium phosphide) substrate — the foundational material for both EML and CW lasers, used in photo-detectors (receivers) and laser diodes (transmitters) — has limited production capacity globally and is subject to geopolitical tension. Second, qualification and ramp-up timelines for new capacity are measured in years, not months. Third, demand is simultaneously increasing across multiple dimensions: more ports per rack, higher data rates per port, and more racks deployed.
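The third factor matters because the demand drivers multiply rather than add. In the minimal Python illustration below, the two 2× factors echo the attach-ratio doubling and the 800G → 1.6T step discussed earlier, while the 1.5× rack-deployment factor is a hypothetical placeholder, not a forecast. Aggregate optical bandwidth is used as a simple proxy for light-source demand, which is itself a simplifying assumption.

```python
from math import prod

# Growth factors for one generation step. The first two echo figures in this
# note; the rack-deployment factor is a hypothetical placeholder.
DRIVERS = {
    "ports per rack": 2.0,       # attach ratio roughly doubling
    "data rate per port": 2.0,   # 800G -> 1.6T
    "racks deployed": 1.5,       # hypothetical
}

# Aggregate optical bandwidth scales with the product of the three drivers.
multiplicative = prod(DRIVERS.values())
# For contrast: the multiple implied by simply adding the individual increments.
additive = 1 + sum(factor - 1 for factor in DRIVERS.values())

print(f"Compounded demand multiple: {multiplicative:.1f}x")
print(f"Naive additive multiple: {additive:.1f}x")
```

Under these placeholder factors, compounding yields a 6.0× increase against 3.5× if the drivers were merely additive, which is why capacity that takes years to qualify struggles to keep pace.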

The practical implication for investors is that the extended period of supply tightness creates a structural pricing advantage for laser and optical-engine suppliers that cannot easily or quickly be competed away. This is the "buy out the store before the storm" dynamic characteristic of AI supply chains — and laser components are one of the last major items in the stack where that dynamic remains fully operative.

TAM trajectory: from $15bn to $154bn

Combining scale-up and scale-out, the addressable market for AI networking is projected to expand from approximately $15bn at the GB300 stage to $154bn at peak Rubin Ultra deployment. The CPO sub-segment alone moves from a rounding error in 2026 to ~$70bn of annual TAM in 2028.

| Category | 2026E | 2027E | 2028E |
|---|---|---|---|
| Scale-up TAM | $7,228m | $30,697m | $82,837m |
| Scale-out TAM | $10,921m | $27,023m | $39,835m |
| of which: CPO components (scale-up + scale-out combined) | | | |
| CPO total (optical engines, ELS, fibre, shufflebox) | $1,024m | $24,840m | $70,881m |
| — Optical engine & FAU | $864m | $16,269m | $43,858m |
| — ELS (external laser source) | $108m | $2,763m | $7,961m |
| — Fibre & MPO | $10m | $4,734m | $15,965m |
| — Shufflebox | $42m | $1,074m | $3,096m |
| Total TAM (scale-up + scale-out) | $18,149m | $57,720m | $122,672m |
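The table's totals and the CPO share can be checked directly. The short Python sketch below uses only the figures above (US$ millions); component sums may differ from the CPO total by $1m because of rounding.

```python
# Figures from the TAM table above, in US$ millions.
TAM = {
    2026: {"scale_up": 7_228, "scale_out": 10_921, "cpo": 1_024},
    2027: {"scale_up": 30_697, "scale_out": 27_023, "cpo": 24_840},
    2028: {"scale_up": 82_837, "scale_out": 39_835, "cpo": 70_881},
}
# CPO components: optical engine & FAU, ELS, fibre & MPO, shufflebox.
CPO_COMPONENTS = {
    2026: (864, 108, 10, 42),
    2027: (16_269, 2_763, 4_734, 1_074),
    2028: (43_858, 7_961, 15_965, 3_096),
}

totals = {}
for year, row in TAM.items():
    totals[year] = row["scale_up"] + row["scale_out"]
    rounding_gap = row["cpo"] - sum(CPO_COMPONENTS[year])
    print(
        f"{year}: total ${totals[year]:,}m | "
        f"scale-up {row['scale_up'] / totals[year]:.0%} | "
        f"CPO {row['cpo'] / totals[year]:.0%} | rounding gap ${rounding_gap}m"
    )

print(f"2026E -> 2028E total TAM multiple: {totals[2028] / totals[2026]:.1f}x")
```

On the table's own figures, CPO is roughly 58% of 2028E total TAM and total TAM grows about 6.8× from 2026E; the $154bn peak and the 59% CPO share quoted elsewhere refer to peak Rubin Ultra deployment rather than to 2028E.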

Networking is no longer the supporting act

The AI infrastructure build-out has been framed — correctly — as primarily a story about compute. Chips, racks, power, cooling. These remain the dominant cost items and the primary focus of capital allocation and analyst attention.

But compute without connectivity is stranded capacity. As AI systems scale from tens of thousands of GPUs to hundreds of thousands to millions — and as the unit of AI computing shifts from the single rack to the multi-rack supernode — the networking layer transitions from infrastructure supporting compute to infrastructure that determines whether compute can function at its design potential.

The numbers are striking. Dollar content per computing unit is projected to increase 29-fold from the current generation to Rubin Ultra. The total addressable market is projected to expand roughly tenfold to $154bn. Co-packaged optics, a technology that barely existed in commercial AI deployments 18 months ago, is projected to represent 59% of that peak market. These are not incremental improvements. They are a structural reordering of where value sits in the AI infrastructure stack.

Importantly, this growth is diversified across technology configurations. The investor concern that one configuration might cannibalise another is largely misplaced: all configurations — pluggable modules, CPO, copper, PCB — are expected to see strong absolute growth driven by the underlying expansion in rack deployments and bandwidth requirements. The question is less whether the market grows and more where within it structural advantages are durable.

Readers of our companion note on the AI build-out scenario framework will recognise the connection. The four supply-side variables that determine total AI capital — silicon service life, data-centre cost, chip architecture mix, build-out elongation — assume a workload mix that is itself shifting. The networking layer is one of the channels through which that shift expresses itself in capital terms.

Every AI chip requires connections. Every new generation requires exponentially more of them. Networking is not just an infrastructure trend — it is becoming one of the defining investment themes of the current technology cycle.

This report has been prepared by Lualdi Advisors for informational and educational purposes only. It draws on publicly available platform specifications, company disclosures, industry conference materials, and Lualdi Advisors' proprietary scenario modelling. All quantitative figures are illustrative scenario projections intended to support analytical discussion; they do not constitute forecasts, consensus expectations, or investment projections. Company and product references throughout this report are purely informational and illustrative; they do not constitute an endorsement, affiliation, or investment recommendation with respect to any issuer. This material does not constitute investment, legal, tax, or financial advice and should not be used as the basis for any investment decision. Lualdi Advisors makes no representations or warranties regarding the accuracy or completeness of the information contained herein. Past performance is not indicative of future results. Forward-looking statements are inherently uncertain and actual outcomes may differ materially from those described.